Hello,

I have two python scripts and I want to put them in a docker container. The output of one python script is written on a text file and this text file is read by the second code which is a dash-plotly dashboard.

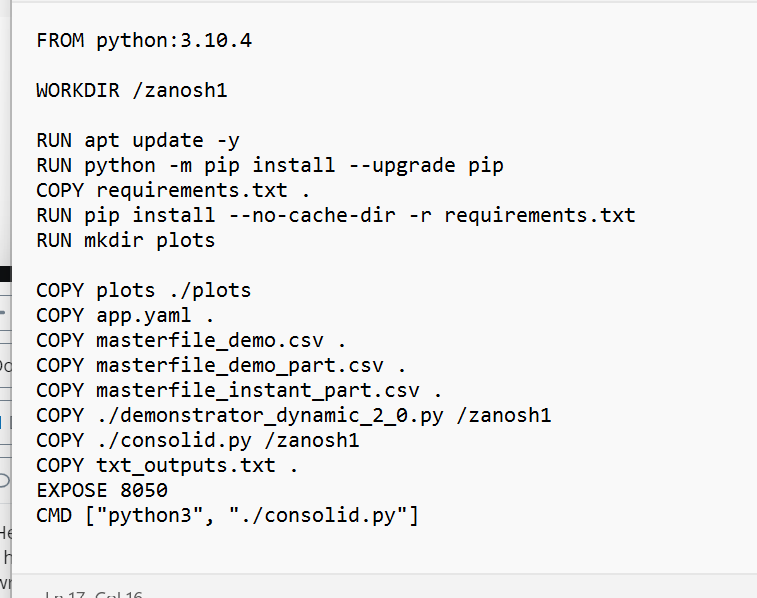

My task now is to containerize them with Docker. I build and run the container and then curl it, but it seems that the data is not passed properly. I attach the Dockerfile to this question. consolid.py is the dashboard code, and demonstrator_dynamic_2_0.py is the script whose output the dashboard needs. The output of one is the input for the other. Can I put them in one container, or should I create two, and how?

I hope I could get a clue for my question.

Everything depends on what these scripts do and when they need to run. If your first script needs to run only once before the second script, you can call the first Python script in a shell script and run the second after the first has exited. Use exec in the shell script so the second script replaces the shell and becomes the first process inside the container, with PID 1, which receives the signals when you stop or kill the container:

python /app/first-script.py

exec python /app/second-script.py
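
If a Python launcher is preferred over a shell script, the same sequencing could be sketched roughly as below. The function name run_then_exec and its exec_fn parameter are made up for this illustration (exec_fn only exists so the exec step can be swapped out when trying the function); the script paths are the placeholders from the shell version above.

```python
import os
import subprocess
import sys


def run_then_exec(first_script, second_script, exec_fn=os.execv):
    """Run first_script to completion, then replace this process with second_script."""
    # check=True raises CalledProcessError if the first script fails,
    # so the second script never starts on missing or partial output.
    subprocess.run([sys.executable, first_script], check=True)

    # os.execv replaces the current process image, so the second script
    # keeps this process's PID (PID 1 if this is the container's main process).
    exec_fn(sys.executable, [sys.executable, second_script])


# As the container's start command this would be called as, for example:
# run_then_exec("/app/first-script.py", "/app/second-script.py")
```

As with exec in the shell script, nothing after the exec call runs in the launcher, because the launcher process no longer exists at that point.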

If you need both scripts running in the background, I would run two containers using the same volume.

Hello and thanks for the reply.

The problem is that when I run the first script in the run wizard, it starts listening and waits for the user to provide a CSV file; after I provide the data, it produces the output.

Then I run the dashboard code. Before all this, I select 'Allow parallel run' in PyCharm. Did you see my Dockerfile? Do I need to change something there?

What do you mean by shell script? Do you mean the terminal? And what does PID stand for?

You have several choices. It sounds like you have a two step process so one option is to build a UI that allows users to upload their data file to be processed and then have it shown on the dashboard. You could do this with a Python web framework like Flask.

If you want to continue to run it as two separate Python files, then you will need to run the container twice: once to create the file and again to display the dashboard. I would modify the demonstrator_dynamic_2_0.py file to take the input file as an argument if it doesn’t already. Then, assuming you tag your Docker image as dashboard and your data file is in the current folder and called my_data.csv, the commands would be:

$ docker run --rm -v $(pwd):/data dashboard demonstrator_dynamic_2_0.py /data/my_data.csv

$ docker run -p 8050:8050 dashboard

The first run will map your current directory into /data inside the container so that demonstrator_dynamic_2_0.py can read the data file from there and do its processing. The second run will run the dashboard, which is the default container behavior.

My OS is Windows. Will these commands work on Windows?

And maybe some more explanation: when I run the first script, it waits for a CSV file to digest and gives a txt file as output. The output consists of parameters that are used to create the visualizations and, more generally, the dashboard. Can I send you my code for testing?

Yes, these commands should work fine on Windows. That’s one of the benefits of using Docker; it uses the same commands regardless of platform. One caveat: $(pwd) is expanded by the host shell, so it works in PowerShell, but in the classic Command Prompt (cmd.exe) you would write %cd% instead. All of the commands passed to the container are executed inside the Docker container, which is running Debian Linux, so paths like /data are the same regardless of the host OS.

You mentioned that your current code runs a wizard that waits for user input. That would need to change because there is no way to interact with the wizard inside the container. You would need to modify your code to take the datafile as an argument from the command line interface. This is what my command example assumes.
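
A minimal sketch of what that change could look like in demonstrator_dynamic_2_0.py, using argparse from the standard library. The process_csv function is only a stand-in for the real analysis, and the rows=... output format and the --output option are assumptions for illustration; only the file name txt_outputs.txt comes from this thread.

```python
import argparse
import csv
from pathlib import Path


def process_csv(input_path, output_path):
    """Placeholder for the real analysis: here it only counts the data rows."""
    with open(input_path, newline="") as f:
        rows = list(csv.reader(f))
    # Assumption: the first row is a header; the real script computes its own parameters.
    data_rows = max(len(rows) - 1, 0)
    Path(output_path).write_text(f"rows={data_rows}\n")
    return data_rows


def main(argv=None):
    parser = argparse.ArgumentParser(description="Process a CSV file without prompting.")
    parser.add_argument("input_csv", help="path to the input CSV, e.g. /data/my_data.csv")
    parser.add_argument("--output", default=None,
                        help="output text file (default: txt_outputs.txt next to the input)")
    args = parser.parse_args(argv)

    input_path = Path(args.input_csv)
    # Writing next to the input keeps the result inside the mounted folder.
    output_path = Path(args.output) if args.output else input_path.with_name("txt_outputs.txt")
    process_csv(input_path, output_path)
    return 0


# In the real script, finish with: raise SystemExit(main())
```

Because the output is written into the same folder as the input by default, it ends up in the mounted host directory and is still there after the container exits.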

If you have your code on GitHub I’d be happy to clone it and test it once you make those changes.

I would need your github account then to share the repository with you please!

As I wrote in my previous comment:

“If you need both scripts running in the background, I would run two containers using the same volume.”

So you run two containers using the same volume. Maybe you didn’t notice, but the last word is a link. Click on “volume” in the quoted comment to learn more about volumes.

I would change many things in the Dockerfile, but this topic is not about those small mistakes. The idea is to run those Python scripts in different containers, and the way to do that is what @rofrano suggested. The only difference is that my idea was to use the same volume in both containers, and there is no volume definition for the second container in his example. I would use it like this:

docker run --rm -v $(pwd):/data dashboard demonstrator_dynamic_2_0.py /data/my_data.csv

docker run -v $(pwd):/data -p 8050:8050 dashboard

Based on your discussion, it would not work for you as it is, but if you want us to help, you should be more specific. Sharing repositories can help, but I prefer to see only the relevant parts so I don’t need to go through the entire source code trying to understand how it works and why.

The problem is that you never said how that script waits for the input. Is it a webapp with an HTML form? Is it reading the standard input? How exactly does that waiting work?

If it is a webapp, you can simply run it in detached mode (docker run -d), and if it reads the standard input, you can run the container with an interactive terminal:

docker run --rm -it ...

An example how it works in bash, not python:

docker run --rm -it ubuntu:22.04 sh -c 'read -r -p "Name: " name && echo "Hello $name"'

Name: Ákos

Hello Ákos
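
The Python counterpart of that bash example might look roughly like this, assuming the first script really reads from standard input. The function name ask_for_csv and the prompt text are invented for the illustration; the stream parameters are only there so the function can be tried without a terminal.

```python
import sys
from pathlib import Path


def ask_for_csv(stdin=sys.stdin, stdout=sys.stdout):
    """Prompt on standard output and read one line from standard input."""
    stdout.write("CSV file: ")
    stdout.flush()
    answer = stdin.readline().strip()
    if not answer:
        # Without an attached terminal, stdin is empty and readline() returns "".
        raise ValueError("no path entered (was the container started with -it?)")
    return Path(answer)
```

Started without -it, the container has no interactive standard input, so the read returns immediately with nothing; that is why the sketch raises an error instead of waiting.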

Note that you can’t always use applications in containers the same way as you did on the host machine, so the ways we are suggesting are not necessarily the best ways in practice. However, I think you should understand how containers work before you start to perfectly containerize your software.

PID = Process ID

Every container (almost) uses the ID 1 for the first process running inside the container and it will be the parent of every other process.

I don’t understand the question about the shell script and terminal. I meant a shell script, since I guess you run Linux containers on Docker Desktop, right? So you could write a shell script with content similar to what I wrote in my previous comment. If your first script is reading the standard input, it could ask for the CSV, create the file and stop, and then the next script could start.

My GitHub account is: rofrano. The bigger question is what you plan to do with this. Are you going to deploy it somewhere? Will it be a web site? Or do you expect people to use it as a command line utility and run two separate commands? Or is it just for you to play around with? What is the user experience?

If you expect people to use it as a web site, I would design it so that they are presented with a web page to upload their data, process it, and then redirect to the web page to view the dashboard. As suggested in my first post, create a simple web site with Flask and call your two Python modules from there.
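
A minimal sketch of that upload-then-redirect flow with Flask might look like the following. The route names, the form field name datafile, the DATA_DIR config key and the placeholder dashboard view are all assumptions for illustration; the real processing and dashboard code would replace the placeholders.

```python
import tempfile
from pathlib import Path

from flask import Flask, redirect, request, url_for

app = Flask(__name__)
# Assumption: uploads go to a temporary folder here; in the container this could be /data.
app.config["DATA_DIR"] = tempfile.mkdtemp()

UPLOAD_FORM = """
<form method="post" enctype="multipart/form-data">
  <input type="file" name="datafile">
  <input type="submit" value="Upload">
</form>
"""


@app.route("/", methods=["GET", "POST"])
def upload():
    if request.method == "POST":
        uploaded = request.files.get("datafile")
        if uploaded is None or uploaded.filename == "":
            return "No file selected", 400
        target = Path(app.config["DATA_DIR"]) / "my_data.csv"
        uploaded.save(str(target))
        # The processing step (the first script's logic) would be called here.
        return redirect(url_for("dashboard"))
    return UPLOAD_FORM


@app.route("/dashboard")
def dashboard():
    # Placeholder: the real dashboard would be served here.
    return "dashboard placeholder"
```

With this shape there is only one long-running process, so a single container would be enough and no prompt on standard input is needed.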

Hello, I get this error when I use the two run commands you gave me:

docker: Error response from daemon: failed to create shim task: OCI runtime create failed: runc create failed: unable to start container process: exec: "demonstrator_dynamic_2_0.py": executable file not found in $PATH: unknown.

Also, I added a new zip file to the IR analysis dashboard; this is the latest update. Thanks for having a look at it.

Sorry about that. I thought you had python as an ENTRYPOINT in your Docker image but in looking at your Dockerfile again, I see now that you used CMD. So you would need to add the call to python as well like this for the first run:

$ docker run --rm -v $(pwd):/data dashboard python demonstrator_dynamic_2_0.py /data/my_data.csv

As @rimelek mentioned, you will need a shared volume so that the second run of the container can find the data from the first. Sorry I forgot to point that out. It could be as simple as sharing that /data folder again but I don’t know where your application is looking for its data so I can’t really recommend.

If you change your second script to look in a folder called /data then you could use something like this:

$ docker run -v $(pwd):/data -p 8050:8050 dashboard

That will map your current folder which should now have the data after the first run, into /data in the container. As I said, you would have to modify your consolid.py file to look for its data files in the /data folder.
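
On the consolid.py side, the lookup could be sketched roughly like this. The DATA_DIR environment variable and both function names are hypothetical, and the one-value-per-line format is only an assumption, since the real layout of txt_outputs.txt is not shown in this thread.

```python
import os
from pathlib import Path


def data_dir():
    """Folder holding the shared files; DATA_DIR is a hypothetical override."""
    return Path(os.environ.get("DATA_DIR", "/data"))


def load_parameters(filename="txt_outputs.txt"):
    """Read the first script's output from the shared folder."""
    path = data_dir() / filename
    if not path.exists():
        raise FileNotFoundError(
            f"{path} not found; run the first container with the same volume first"
        )
    # Assumption: one parameter per line; the real file format may differ.
    return [line.strip() for line in path.read_text().splitlines() if line.strip()]
```

Defaulting to /data matches the -v $(pwd):/data mapping in the run command, while the environment variable would let the same code run outside a container with a different folder.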

Dear Mr. Rofrano I shared a code with you in Github. Can you have a look at that please?

And can you please tell me how to optimize it with curl? Is there a docker curl command instead of docker exec? My professor’s idea is similar to yours: to have a data folder and send the data with curl, since we want to put the image on an edge device and later in the cloud. What is the best way to change it for this purpose?

My application has four tabs. The data for the first two tabs comes from the plots folder, and the data for tabs 3 and 4 comes from txt_outputs.txt and masterfile_instant_part.csv.

@rimelek I want to share with you my code and I need your ID in github. Thanks

But I would prefer that you not share it with me, as I wrote in the other topic that I closed:

When I have a little time, I read/answer public posts. Thank you for your understanding.

@rofrano Can you please check your github account?